Your vendors are deploying AI agents with access to your data. Your TPRM program wasn’t built to see them, and attackers already know it.

Your TPRM program assesses the vendors you know about. It scores their security posture, sends them questionnaires, monitors their external footprint. What it almost certainly does not do is follow what happens when one of those vendors deploys an AI agent — an autonomous system that has been granted access to your data, calls third-party APIs, caches context across sessions, and operates entirely outside the perimeter your risk assessments were designed to cover.

This is the new shadow IT problem. And unlike the SaaS sprawl of the 2010s, which was at least visible in your network logs, the agentic layer is architecturally designed to be invisible to the controls you have today.

“Your vendor’s AI agent is a fourth party you never onboarded, never assessed, and never asked about — and it may already have read access to your systems.”

How Your Vendors Became AI Agents Without You Noticing

The adoption curve for AI agents inside enterprise software is moving faster than any equivalent technology shift in recent memory — faster than SaaS, faster than mobile, faster than cloud. The reason is simple: the integration barrier is almost nonexistent. A vendor’s development team can wire an AI agent into their product in an afternoon. That agent can be granted access to APIs, customer data contexts, and external tool servers with a few configuration changes.

From a TPRM standpoint, this means the vendor you onboarded eighteen months ago — the one whose security questionnaire you reviewed, whose pentest report you filed — has since become a materially different risk entity. They didn’t notify you. They weren’t required to. And your annual review cycle won’t catch it until long after the exposure exists.

| Traditional TPRM already struggles with fourth-party risk — the vendors your vendors use. AI agents make this exponentially harder. A single agent integration can create a dynamic chain of tool calls, MCP server connections, and context provider relationships that didn’t exist when you last assessed the vendor, and that changes with every model update or plug-in addition. |

The Three Gaps Your Current TPRM Program Has

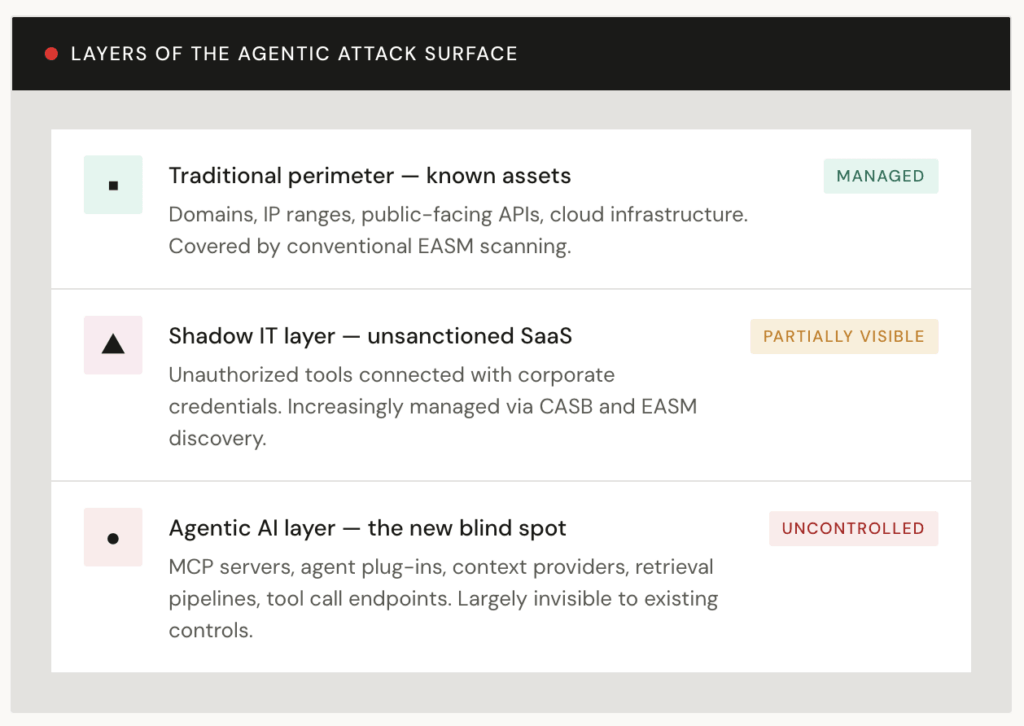

Most TPRM programs were built around a stable, identifiable vendor footprint. The agentic layer creates three structural gaps that existing frameworks weren’t designed to close.

The gaps cluster around the same theme: TPRM frameworks assess the vendor as a static entity at a point in time. AI agents are dynamic by nature — they evolve with model updates, acquire new tool integrations, and expand their data access scope incrementally. The assessment cadence that works for a payroll processor doesn’t work for a system that can change its behavior between your quarterly reviews.

Why This Mirrors the Software Supply Chain Crisis

If you were in a security role during the Log4Shell incident in late 2021, the agentic risk pattern will feel uncomfortably familiar. The Log4Shell vulnerability wasn’t in your application — it was in a logging library your application happened to call, which happened to interpret attacker-controlled strings as executable instructions. The blast radius was enormous precisely because the dependency was invisible: organizations didn’t know they were running Log4j, which meant they didn’t know they were vulnerable.

The agentic equivalent works like this:

01 Your vendor deploys an AI agent

Integrated into the product you use, with access to data you’ve shared as part of the service relationship. –> Visible to you

02 The agent connects to a third-party tool server

An MCP server, a retrieval API, a context provider — registered at the agent framework level, not disclosed in any contract or questionnaire. –> Blind spot

03 The tool server is compromised

An attacker targets the plug-in registry or injects a malicious context provider. The agent begins behaving as instructed — exfiltrating data, escalating permissions, or moving laterally. –> Blind spot

04 Your data is the blast radius

The agent had access to your context. The breach surfaces in your environment — but originated three hops away in a dependency you never assessed. –> Discovered late

The mechanism is different — prompt injection instead of JNDI lookup, tool call chains instead of classpath loading — but the structural problem is identical: a trusted intermediary passes attacker-controlled input to a system that executes it. And the TPRM lesson is the same one that supply chain security taught us: you are responsible for the risk introduced by everything your vendors touch, not just your vendors themselves.

| Prompt injection is what happens when attacker-controlled content — embedded in a document, an API response, or a web page — is processed by an AI agent as a trusted instruction. The agent acts on it. If the agent has access to your data or systems, that action can include exfiltration, unauthorized writes, or lateral movement. This is not a hypothetical risk category. It has already been demonstrated in real-world deployments of production AI agents. |

What Effective TPRM Looks Like for the Agentic Era

Extending your TPRM program to cover AI agent risk doesn’t require rebuilding from scratch. It requires extending three existing practices into territory they don’t currently reach.

Add AI agent disclosure to your vendor questionnaires

Ask explicitly: Does your product use AI agents? What data do they have access to? What third-party tool integrations do they call? What is your process for disclosing model updates that affect data handling? Most vendors haven’t been asked — which means the information doesn’t exist in any form your risk team can act on.

Treat AI model update pipelines as supply chain risk

A vendor updating their underlying model is a supply chain event — equivalent to a dependency version bump. The new model may have different data handling behaviors, different tool call capabilities, or different security properties than the one you assessed. Continuous monitoring of vendor infrastructure needs to include signals from model deployment activity where possible.

Extend fourth-party mapping to include tool call dependencies

When you assess a vendor who uses an AI agent, the agent’s tool integrations are your fourth-party risk. Map them. An MCP server operated by a third party that your vendor’s agent calls with your data context is a node in your supply chain — whether or not it appears in any contract.

Increase assessment frequency for AI-enabled vendors

Annual questionnaires are not adequate for vendors whose risk surface changes with every model update or agent plug-in addition. AI-enabled vendors need continuous external monitoring and more frequent structured assessments — triggered not just by calendar but by observable changes in their infrastructure and product behavior.

Scope data minimization contractually for agentic access

If a vendor’s AI agent doesn’t need access to a category of your data, say so in the contract — and verify it with your external monitoring. Scope creep in agent permissions is silent and incremental. Contractual data minimization requirements, combined with continuous posture monitoring, are the control layer.

The Governance Question No One Is Asking Yet

There is a harder question underneath the tactical checklist, and it is one that TPRM programs will have to answer sooner than most organizations expect: who is responsible when an AI agent causes a breach, and the breach originates four hops into your vendor’s supply chain?

Regulatory frameworks are moving — the EU AI Act, DORA, and updated NIST guidance all gesture toward this problem — but they are moving slowly relative to the adoption curve. In the interim, organizations that have defined clear contractual obligations around AI agent disclosure, data access scope, and incident notification will be materially better positioned than those relying on standard vendor contracts written before agentic systems existed.

| DORA’s ICT third-party risk requirements already demand documentation of all critical information asset dependencies. As regulators begin clarifying whether AI agents constitute “ICT services” under these frameworks — and early guidance suggests they will — the organizations with mature AI vendor inventories will have a significant head start on compliance. Build that inventory now, before it becomes an audit finding. |

What This Means if You’re Running TPRM Today

The honest picture is that most TPRM programs have a gap they don’t know the shape of yet. They have coverage of the vendor’s public-facing infrastructure, some coverage of their internal security practices through questionnaires, and continuous monitoring of their external attack surface. What they don’t have is any visibility into the agentic layer — the AI systems those vendors are deploying, the tool call chains those systems are making, or the fourth-party dependencies those chains are creating.

That gap is being actively mapped by adversaries who understand that the AI agent ecosystem has the same structural properties that made the open-source supply chain so attractive as an attack vector: widely deployed, deeply trusted, and largely unmonitored.

“Shadow IT was a visibility problem. The agentic layer is a visibility problem with autonomous decision-making attached — and it lives inside your most trusted vendor relationships.”

The response is the same one that worked for shadow SaaS and software supply chain risk: systematic discovery, classification, and continuous monitoring. Not a blanket prohibition — that won’t work any more than it worked against SaaS adoption. But a deliberate extension of your existing TPRM framework into the territory where the risk actually lives.

Your vendors are already deploying AI agents. The question is whether your risk program knows what those agents have access to.